The Green IT Switzerland platform was founded in 2019 on the initiative of the Green IT special interest group of SI (Schweizer Informatik Gesellschaft, the Swiss Informatics Society) and the Swiss Telecommunications Association (asut).

The platform aims to

- improve understanding and knowledge of energy and resource consumption and of other sustainability aspects,

- put more energy-efficient and more sustainable solutions for operating IT infrastructure into practice (Green in IT),

- and better understand and exploit the multiple benefits of smart, digitally supported service models (Green by IT).

Green in IT

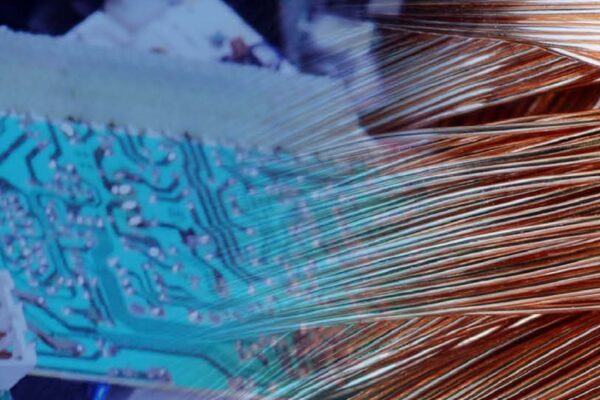

"Green in IT" focuses on in-house measures such as procuring and operating energy-efficient IT devices, servers, and network infrastructure. Equally important are the associated IT processes and the development of software that consumes little energy.

Viewed holistically, the goal is responsible operation and maintenance of the infrastructure that takes all sustainability aspects into account.

How do you rate your in-house measures? Take the test with our catalogue of measures. Open the catalogue of measures now…

Green by IT

"Green by IT" deals with the sustainability impacts of IT-supported applications and services in the areas of Smart Home, Smart Building, and Smart City.

The focus is on the multiple benefits that smart, digitally supported service models bring to the ICT industry, service providers, FM providers, and smart cities. These include simpler process handling, lower operating costs, and lower energy and resource consumption (footprint).

In short, the motto is "greener thanks to smart": smart technologies help reduce our energy and resource consumption.

The environmental impact of ICT is exploding

Annual meeting 2019

What’s up in Green IT, 2018